Abstract

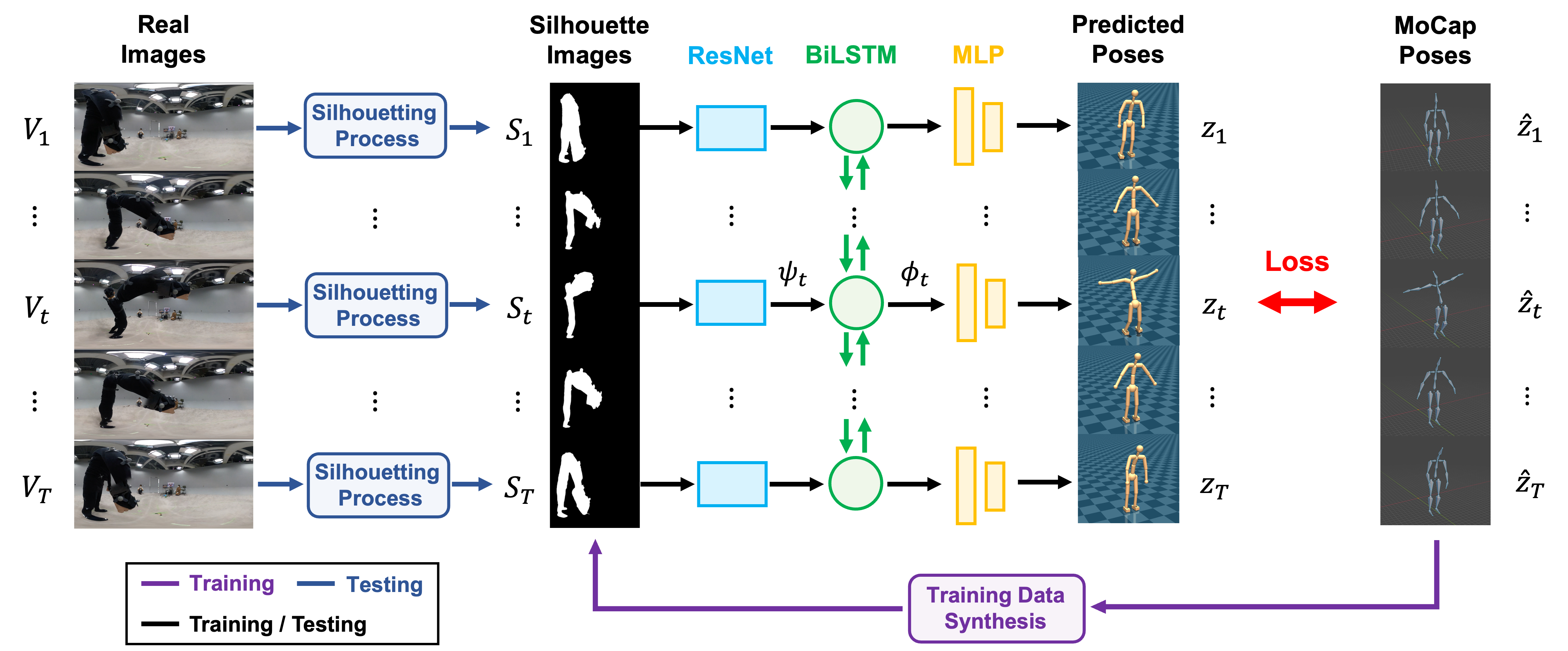

In this paper, we propose a framework for 3D human pose estimation using a single 360° camera mounted on the user's wrist. Estimating a 3D human pose with such a simple setup has considerable potential for applications such as daily-living activity monitoring and motion analysis for sports training. However, no existing method has tackled this task, both because it is difficult to estimate a human pose from a single camera image that captures only part of the body and because training data are lacking. We propose a method that translates wrist-mounted 360° camera images into 3D human poses. Because we are the first to address this task, no existing dataset can be used, so we build our own dataset from synthetic data. This solution, however, introduces a domain gap between the synthetic data used for training and the real images used at inference. To close this gap, we propose a silhouette-based synthetic data generation scheme designed for this task. Extensive experiments comparing our method with several baseline methods demonstrate the effectiveness of our silhouette-based pose estimation approach.
